Disavowing links has been a hotly debated topic within SEO circles for many years now.

Many SEO experts are unsure whether they still need to submit disavows for low-quality links or whether this practice could actually have a negative impact on their website ranking, as Google suggests.

Regardless of the debate, Google’s algorithms do still use the quality of links as a way to rank websites, and Google does still hand out penalties if a site doesn’t comply with its tight guidelines.

This means that it’s just as important in 2019 to audit your links and file a disavow with Google when they are negatively affecting your website ranking.

In this article, we’re going to look more closely at the debate in the SEO industry, remind ourselves what manual actions are, and understand which links we should still disavow.

Why the debate?

To clear up the debate, we need to travel back in time to those days when Britney Spears shaved her head, ‘to google’ was officially declared a verb and MySpace was the best thing since sliced bread. We’re talking about the early days of the internet as we know it, from the late 90s to the early 2000s.

During that time, SEO was still in its infancy and people did what they could to get their webpages to rank highly. This involved a lot of spammy practices such as keyword stuffing and hidden text on the website.

PageRank

Google had always set itself ahead of the competition by focussing on link quality, so it created PageRank. The thinking was that if there were lots of links to a website, then it must be of high quality. But people simply added their details to directories, left comments on websites and paid for links, so the algorithm didn’t work as effectively as hoped.

The Penguin algorithm & the disavow tool

Once the Penguin algorithm update went live in April 2012, Google could penalise your website for having low-quality or spammy links. Linking practices changed completely and many websites found that their traffic declined sharply overnight. The only way to recover was to go in and remove these links.

To help webmasters deal with these links and recover, Google created the disavow tool. By uploading a text file, you could ask Google to ignore the links of your choice so that your web traffic could recover.

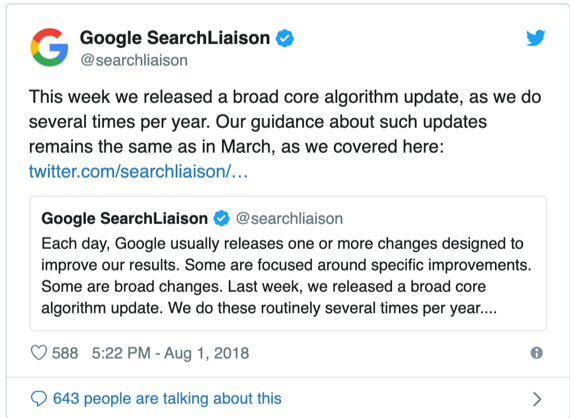
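The text file itself is simple: lines starting with `#` are comments, a line starting with `domain:` disavows every link from that domain, and any other line is treated as a single URL to disavow. As a rough illustration, here is a minimal Python sketch that builds such a file. The function name `build_disavow_file` and the `.example` addresses are made up for this example, and matching a URL against a domain rule by exact hostname is a simplifying assumption.

```python
from urllib.parse import urlparse


def build_disavow_file(domains, urls, note=""):
    """Return the text of a disavow file.

    domains: hostnames whose links should all be ignored
    urls: individual linking pages to ignore
    note: optional comment placed at the top of the file
    """
    lines = []
    if note:
        lines.append(f"# {note}")

    # One "domain:" rule per hostname, de-duplicated and sorted.
    clean_domains = sorted({d.strip().lower() for d in domains if d.strip()})
    lines.extend(f"domain:{d}" for d in clean_domains)

    # Skip single URLs whose hostname already has a domain rule
    # (exact hostname match only, an assumption of this sketch).
    for url in sorted(set(urls)):
        host = (urlparse(url).hostname or "").lower()
        if host and host not in clean_domains:
            lines.append(url)

    return "\n".join(lines) + "\n"


# Hypothetical example data.
text = build_disavow_file(
    domains=["Spam-Directory.example"],
    urls=[
        "http://spam-directory.example/listing/42",
        "http://blog.example/comments?page=3",
    ],
    note="Owner contacted, no reply",
)
print(text)
```

Keeping a comment with each batch of entries is a useful habit, because it records why a link was disavowed when you review the file later.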

Penguin 4.0

‘Problem solved!’ you’re probably thinking. But the case isn’t quite so straightforward because in September 2016, Penguin 4.0 was released. This meant that Google would no longer penalise your site for low quality links but instead devalue them.

According to Google, you wouldn’t need to submit a disavow unless you’d been dealt a manual action (more on that below) or you were actively trying to prevent one.

Basically, if you hadn’t suffered from problems arising from your links and you didn’t have cause for concern, there wouldn’t be any need to do anything.

Google’s current position on disavowing links

Over the years since, Google has stated that disavows don’t help websites, but there has always been some confusion as to whether that’s correct.

But in a Google Help Hangout this year, John Mueller from Google stated that disavowing can actually help some websites, especially when there are ‘bad links’ that haven’t resulted in a manual action.

“…So, it’s something where our algorithms when we look at it and they see, oh, there are a bunch of really bad links here. Then maybe they’ll be a bit more cautious with regards to the links in general for the website. So, if you clean that up, then the algorithms look at it and say, oh, there’s– there’s kind of– it’s OK. It’s not bad.”

To clarify, this means that it’s still worth disavowing your links, even if you haven’t received a manual action yet. Unnatural links can still influence the trust that Google places in your site and affect your ranking.

Having said that, Google still isn’t a fan of allowing us to use the disavow tool and has ensured that it’s hard to find in the Google Search Console. The thinking is that people are disavowing too many links, and according to Gary Illyes of Google, “If you don’t know what you’re doing, you can shoot yourself in the foot.”

What is a manual action?

Much of the debate centres on the idea of a manual action, so it’s worth quickly recapping what that actually is.

As the name suggests, a manual action is taken by a Google staff member and lets you know that Google isn’t happy with some of your content. It tells you that it will omit or demote certain pages of your website from its search results.

The content and pages that can be penalised in this way include:

- Unnatural links to or from your site: Just like it says on the tin, this refers to both inbound and outbound links which don’t seem quite right.

- Thin content: This includes content created purely to promote, auto-generated content and content created by guest posters that doesn’t add value.

- Hidden text and keyword stuffing: Keywords and text that appear too often and aren’t always visible to the reader.

- Cloaking and suspicious redirects: Hidden content and conditional redirects fall into this category.

- User-generated spam: Spammy blog comments, forum posts and profiles.

- Spammy freehosts: If your website is hosted with other spammy websites, Google might paint you with the same brush.

- Spammy structured markup: Does your markup match your site? If not, Google is likely to penalise you.

You can find out if you are subject to a manual action by going to Google Search Console and looking under ‘manual actions’.

Should you disavow your links?

This is a difficult question to answer. It all depends on just how bad those links are and whether they are having a significant impact on your organic search traffic.

Disavowing should always be done with care, and only once you’ve done everything else you can to remove the link manually.

It’s a serious action to take and, as Google says, ‘This is an advanced feature and should only be used with caution. If used incorrectly, this feature can potentially harm your site’s performance in Google’s search results.’

In essence, if you use the disavow tool incorrectly, you could harm your SEO.

If you discover spammy, artificial, or low-quality links pointing back toward your site or you have received a manual action, you should first reach out to the owner of the website in question and ask for the link to be removed.

Usually this is as simple as visiting the ‘contact us’ page on their website or searching for them on social media. Then you can send them a polite email asking them to remove the link. Only if that fails should you consider disavowing your links.

What about when there’s not a manual action against you?

It gets trickier still if you don’t have a manual action against you, yet you’re still concerned about the quality of your links.

The best way to start is by conducting a link audit that can shed light on the situation. From there, you can go through the links to check their quality.

Don’t worry too much about those random low-quality links that can often appear because Google can happily ignore them. Google will only issue a manual action for low-quality links that you or your SEO team are responsible for.

Instead, focus on those linking practices which violate Google’s guidelines.

This includes things like:

- Paid links or link schemes

- Paid articles containing links

- Articles published on other sites that contain links back to yours (often through guest posting)

- Product reviews and links given in exchange for free products

- Excessive use of reciprocal linking

- Widgets that require linking

- A high number of suspicious anchor texts

It’s also worth considering non-editorial links from sources such as:

- Malware

- Cloaked sites (which show Google one version of a page but the user a different one)

- Suspicious 404s

- Pills, poker and porn
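A first pass over a large backlink list can be partly automated before you review anything by hand. The Python sketch below flags links whose anchor text or source URL contains suspicious keywords and counts the flagged links per linking domain. The keyword list, the function name `triage_backlinks` and the sample data are all assumptions made for illustration, and a keyword match is only a prompt for manual review, not proof that a link is harmful.

```python
import re
from collections import Counter
from urllib.parse import urlparse

# Hypothetical keyword list; tune it to your own niche.
SUSPICIOUS = re.compile(r"\b(pills?|poker|casino|porn|payday)\b", re.IGNORECASE)


def triage_backlinks(backlinks):
    """Flag (source_url, anchor_text) pairs that match a suspicious keyword.

    Returns the flagged pairs plus a count of flagged links per domain.
    """
    flagged = [
        (url, anchor)
        for url, anchor in backlinks
        if SUSPICIOUS.search(anchor) or SUSPICIOUS.search(url)
    ]
    per_domain = Counter(urlparse(url).hostname for url, _ in flagged)
    return flagged, per_domain


# Hypothetical sample of an exported backlink list.
backlinks = [
    ("http://cheap-pills.example/page1", "buy pills online"),
    ("http://cheap-pills.example/page2", "best poker bonus"),
    ("http://news.example/review", "a helpful guide to disavowing"),
]
flagged, per_domain = triage_backlinks(backlinks)
for domain, count in per_domain.most_common():
    print(domain, count)
```

Domains with many flagged links are natural candidates for a closer look, while a domain with a single match may well be a false positive.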

You can often use your common sense to decide how bad a link is and whether to disavow. If there’s a borderline case and it seems like a matter of time before you receive a manual action, it might be worth disavowing anyway.

Generally speaking, you might not need to worry if these links only point to pages that you don’t care about, that don’t generate revenue for your business, and that don’t drag down your organic search traffic and rankings as a whole. You could simply delete the page altogether and move on.

Conclusion: Disavowing links and the future of Google algorithms

Maintaining high-quality links and disavowing those which are low quality remains just as important in 2019 as it ever has been.

The continual advancement of algorithms such as Penguin 4.0 can minimise the problem, but there will always be cases which slip through the net and negatively affect your website ranking.

The key remains to focus on creating high-quality outbound links on your website, regularly monitoring the quality of incoming links via the Google Search Console or your SEO team, and disavowing those which are harming your online presence.